Walking on two legs looks easy because you have done it for decades without thinking. It is, in fact, one of the hardest problems in robotics. Roboticists have been working on it since the 1970s. We are still not done.


Here is the honest version of how a 2026 humanoid robot actually learns to walk — not the marketing version, not the science fiction version, but the real combination of machine learning, simulators, and old-fashioned engineering that produces a Unitree G1 walking calmly across your trade show booth.

Image source: Reddit / classic Honda ASIMO stair tumble compilation

The first thing every humanoid robot does is fall. Sometimes for months. Engineering teams budget for it. A fall is not a bug; it is the start of the curriculum.

This is part of why early humanoid teams kept their work behind closed doors. The first hundred attempts at walking ended on the floor. Honda’s humanoid prototype programs in the 1990s, which led to ASIMO’s debut in 2000, reportedly burned through dozens of test units before producing the controlled walks that became famous in 2000s demos. The early Boston Dynamics Atlas units reportedly fell so often that the engineering team built recovery routines just to spare the cost of constant repairs.

Falling is information. Each fall tells the system something specific — which joint failed, which sensor was slow, which surface confused the balance estimator. Over time, the falls get rarer. The walk gets steadier. The robot stops being scared of the doorway. None of this is magic. It is iteration.

Imitation Learning: The Robot Watches a Person

The fastest way to teach a robot a new motion is the same way you teach a kid: show it.

Imitation learning is the technique. A human performs the motion — walking, waving, picking up a flyer, posing for a photo. The motion gets captured by sensors, cameras, or a motion-capture suit. The robot’s controller learns to produce its own version of the same motion using its own joint configuration.
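As a rough illustration of learning from demonstration, the sketch below fits a tiny linear policy to recorded (state, action) pairs by least squares. This is a basic form of behavior cloning, not any vendor's actual pipeline. The wave-phase states, the elbow-angle actions, and the names `fit_linear_policy` and `policy` are hypothetical. A real controller maps many sensor readings to many joint targets, usually with a neural network.

```python
from statistics import mean


def fit_linear_policy(demos):
    """Least-squares fit of action = w * state + b to (state, action) pairs."""
    states = [s for s, _ in demos]
    actions = [a for _, a in demos]
    s_bar, a_bar = mean(states), mean(actions)
    var = sum((s - s_bar) ** 2 for s in states)
    cov = sum((s - s_bar) * (a - a_bar) for s, a in demos)
    w = cov / var
    return w, a_bar - w * s_bar


# Hypothetical demonstration: wave phase (0..1) -> elbow angle in radians.
demos = [(0.0, 0.2), (0.25, 0.5), (0.5, 0.8), (0.75, 1.1), (1.0, 1.4)]
w, b = fit_linear_policy(demos)


def policy(state):
    """The cloned policy: predicts an elbow angle for a given wave phase."""
    return w * state + b
```

The fitted policy is only trustworthy for phases between 0 and 1, the range the demonstrator actually covered.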

This works well for short, structured movements. The wave that a humanoid performs at your trade show was probably trained by a human waving in a motion-capture room six months earlier. The pose. The greeting. The hand-off of a microphone. All taught by demonstration.

What it does not do well: novel situations the demonstrator never showed. The robot can imitate a wave perfectly. It cannot extrapolate the wave to a wave-while-walking-up-a-ramp without separate training. Imitation is a shortcut, not a generalization. Modern robotics teams pair imitation with the next technique to fill in the gaps.

Image source: NVIDIA / Isaac Lab reinforcement learning training reference

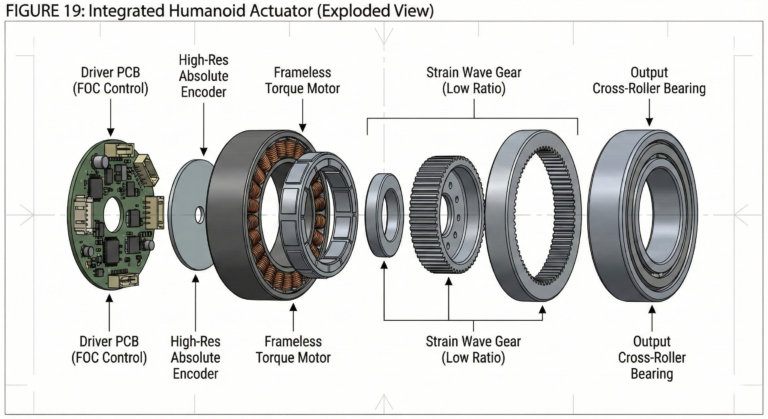

Reinforcement learning is what makes 2025 and 2026 humanoid robots different from the 2010s generation. It is also the technique that took the longest to make practical.

The idea is simple. The robot tries something. If the result is good (it stayed upright, it reached the goal), the controller gets rewarded. If the result is bad (it fell, it missed the target), the controller gets penalized. Repeat ten million times and you have a controller that handles situations its designers never explicitly programmed.
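The try, score, keep-what-worked loop can be sketched on a toy problem. The code below balances a simplified inverted pendulum and improves two controller gains by random search, which is a much cruder stand-in for the policy-gradient algorithms real teams use. Every constant here (time step, fall angle, noise scales) and every function name is an assumption made for illustration.

```python
import random

DT = 0.01            # integration step in seconds (assumed)
G_OVER_L = 9.81      # gravity / length for a 1 m toy pendulum (assumed)
FALL_ANGLE = 0.5     # radians of lean that count as a fall (assumed)


def episode_reward(k, d, steps=500):
    """Reward = number of steps the toy pendulum stays upright."""
    theta, omega = 0.05, 0.0                 # start with a small lean
    for t in range(steps):
        torque = -k * theta - d * omega      # the "controller" being tuned
        omega += (G_OVER_L * theta + torque) * DT
        theta += omega * DT
        if abs(theta) > FALL_ANGLE:
            return t                         # fell: reward stops here
    return steps


def random_search(trials=200, seed=0):
    """Perturb the gains at random; keep any change that scores at least as well."""
    rng = random.Random(seed)
    best = (0.0, 0.0)
    best_reward = episode_reward(*best)
    for _ in range(trials):
        cand = (best[0] + rng.gauss(0, 5.0), best[1] + rng.gauss(0, 1.0))
        reward = episode_reward(*cand)
        if reward >= best_reward:
            best, best_reward = cand, reward
    return best, best_reward


baseline = episode_reward(0.0, 0.0)   # no control at all: falls quickly
gains, learned = random_search()
```

Nobody tells the search which gains are correct. It only sees how long each attempt stayed upright, which is the core of the reward idea.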

The breakthrough was not the algorithm. It was the speed at which you could run the experiments. Modern reinforcement learning systems train inside physics simulators — thousands of virtual robots running at once on a single GPU, each falling and recovering and learning. According to NVIDIA Isaac Lab documentation, a humanoid walking policy that would take years to train on real hardware can be trained in days inside the simulator. The result is a controller that handles uneven floors, surprise pushes, and recovery from near-falls because it has seen all of them virtually.
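The years-to-days claim is mostly arithmetic. The sketch below divides the total practice time by the number of simulated robots running at once. The figures (10 robot-years of experience, 4096 parallel robots, real-time simulation speed) are hypothetical and chosen only to show the scale, not taken from any published benchmark.

```python
def wall_clock_days(experience_years, num_envs, realtime_factor=1.0):
    """Days of wall-clock time needed to collect the given simulated experience."""
    experience_days = experience_years * 365
    return experience_days / (num_envs * realtime_factor)


# Hypothetical numbers: 10 robot-years of practice.
single_robot = wall_clock_days(10, num_envs=1)     # one robot, real time
parallel = wall_clock_days(10, num_envs=4096)      # 4096 simulated robots
```

Under these assumptions, a decade of practice on one robot fits into less than a day of simulation.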

Then the controller gets transferred to the real robot. That is the next problem.

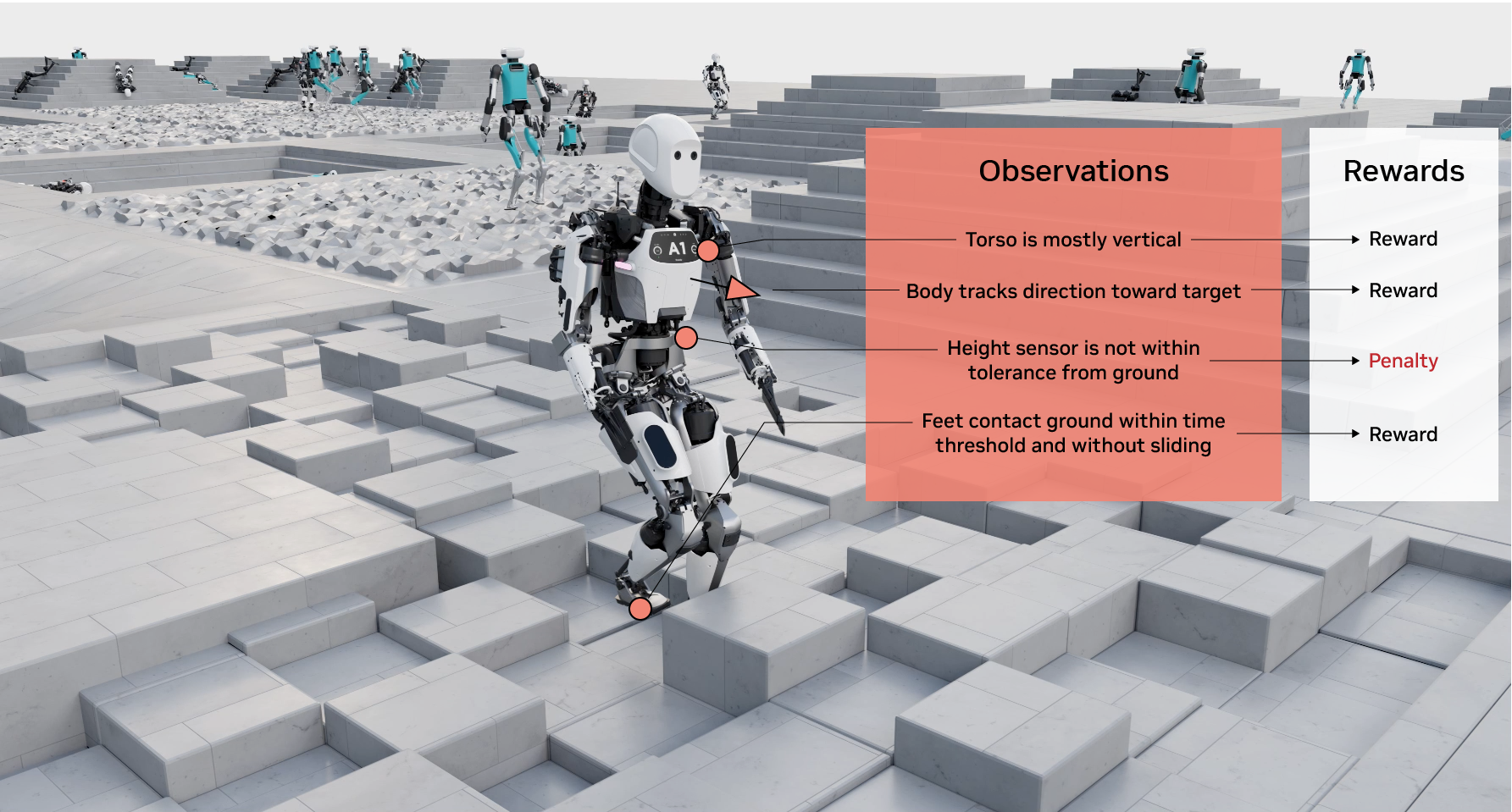

The Simulator That Runs Millions of Falls

You cannot run ten million falls on a real robot. The hardware breaks. The cost is impossible. So almost all modern humanoid training happens in simulation.

The major simulation tools in use as of 2026 are NVIDIA Isaac Sim, MuJoCo, Drake, and Unity with its ML-Agents toolkit. Each models the physics of the robot, the floor, the balance forces, and the joint torques accurately enough that a controller trained in simulation often transfers to the real robot with only modest tuning.

The simulator is also where engineers test edge cases that would be irresponsible to run on physical hardware. Pushing the robot from behind. Tilting the floor 15 degrees. Removing one motor mid-step. Replacing the foot with a slightly heavier prototype. All of these get tested virtually first. The robot only sees them in physical reality after the controller has handled them in simulation thousands of times.
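A virtual edge-case suite might look something like the sketch below. It reuses a toy inverted pendulum with hand-picked gains standing in for a trained controller, then runs it through a push, a tilted floor, and a brief motor dropout. The `Scenario` fields, the gains, and the way tilt is modeled (a constant disturbance) are all simplifying assumptions, not how any production simulator represents these events.

```python
import math
from dataclasses import dataclass

DT = 0.01            # integration step in seconds (assumed)
G_OVER_L = 9.81      # gravity / length for a 1 m toy pendulum (assumed)
FALL_ANGLE = 0.5     # radians of lean that count as a fall (assumed)
K, D = 40.0, 8.0     # hand-picked gains standing in for a trained policy


@dataclass
class Scenario:
    name: str
    push: float = 0.0            # angular velocity kick (rad/s) at step 100
    tilt_deg: float = 0.0        # floor tilt, modeled as a constant disturbance
    motor_off: range = range(0)  # steps during which the motor gives no torque


def survives(sc, steps=500):
    """Return True if the toy robot stays upright for the whole episode."""
    theta, omega = 0.05, 0.0
    tilt = math.radians(sc.tilt_deg)
    for t in range(steps):
        if t == 100:
            omega += sc.push
        torque = 0.0 if t in sc.motor_off else -K * theta - D * omega
        omega += (G_OVER_L * (theta + tilt) + torque) * DT
        theta += omega * DT
        if abs(theta) > FALL_ANGLE:
            return False
    return True


suite = [
    Scenario("nominal"),
    Scenario("push from behind", push=1.0),
    Scenario("floor tilted 15 degrees", tilt_deg=15.0),
    Scenario("motor dropout", motor_off=range(200, 230)),
    Scenario("huge shove", push=10.0),
]
results = {sc.name: survives(sc) for sc in suite}
```

A failing scenario costs nothing here. On hardware, the same result would be a repair bill.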

If you watch a Boston Dynamics, Unitree, or Figure AI demo where the robot recovers from being shoved or knocked off balance, that recovery was learned in simulation long before the demo. The simulator is the gym. The stage is just the performance.

Image source: The Construct / humanoid robot reinforcement learning training

Sim-to-real transfer is the unsolved problem at the heart of humanoid robotics, and one reason early robots fell more than recent ones.

The simulator is a physics model. The real world is physics. Those two are close but not identical. The motors do not produce exactly the torque the simulator predicts. The floor friction is slightly different from session to session. The cameras pick up reflections the simulator did not render. Every small mismatch becomes a balance challenge.

Modern teams handle this with domain randomization — training the robot in simulators that intentionally vary the friction, the motor torques, the lighting, and the noise. A controller that handles randomized simulators handles real-world variation more reliably. The cost is more training time. The benefit is a robot that walks across an unfamiliar floor on the first try.
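In code, domain randomization often amounts to drawing a fresh set of physics parameters before each training episode. The sketch below shows that sampling step only. The parameter names and ranges are hypothetical; real projects tune them per robot and per simulator.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    floor_friction: float     # friction coefficient of the floor
    motor_strength: float     # multiplier on nominal motor torque
    light_level: float        # multiplier on rendered scene brightness
    sensor_noise_std: float   # standard deviation of added sensor noise


# Hypothetical ranges, chosen only for illustration.
RANGES = {
    "floor_friction": (0.4, 1.2),
    "motor_strength": (0.8, 1.2),
    "light_level": (0.5, 1.5),
    "sensor_noise_std": (0.0, 0.05),
}


def sample_params(rng):
    """Draw one randomized set of simulator parameters for a training episode."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


rng = random.Random(0)                                # seeded for repeatability
episodes = [sample_params(rng) for _ in range(1000)]  # one draw per episode
```

A controller that does well across all 1000 draws has never been allowed to rely on one exact friction value or one exact motor.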

Even with all this, the first walk on real hardware still has surprises. Engineering teams budget for adjustment time after sim-to-real transfer. The robot is the prototype. The simulator was the rehearsal.

Motion Capture: Borrowing From Hollywood

The same motion-capture technology that animated the original Lord of the Rings movies trains modern humanoid robots. The pipeline is similar. A performer wears a suit covered in reflective markers. Cameras around the room capture the markers at a high frame rate. Software then converts the marker positions into joint angles.

For robotics, that motion-capture data becomes a target. The robot’s controller learns to produce the same joint trajectories using its own actuators. The viral dance routines, the parkour-style demos, the choreographed fight scenes — many of them started as motion capture sessions with human performers, then got transferred to the robot through the imitation learning pipeline above.
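One small piece of that transfer can be sketched directly: a human's joint angles do not always fit a robot's joint limits, so the captured trajectory has to be adapted before it becomes a target. The code below clamps each captured angle into the robot's range and measures how far the result drifts from the original. The joint names, limits, and angle values are hypothetical, and real retargeting also accounts for limb lengths, balance, and timing.

```python
def retarget(frames, joint_limits):
    """Clamp each captured joint angle into the robot's allowed range."""
    return [
        {joint: min(max(angle, joint_limits[joint][0]), joint_limits[joint][1])
         for joint, angle in frame.items()}
        for frame in frames
    ]


def tracking_error(reference, actual):
    """Mean absolute joint-angle difference between two trajectories."""
    diffs = [abs(ref[j] - act[j]) for ref, act in zip(reference, actual) for j in ref]
    return sum(diffs) / len(diffs)


# Hypothetical capture: two frames of a dance move, angles in radians.
human = [{"knee": 0.5, "hip": 1.0}, {"knee": 2.5, "hip": -1.0}]
limits = {"knee": (0.0, 2.0), "hip": (-0.5, 1.5)}

robot_targets = retarget(human, limits)
error = tracking_error(human, robot_targets)
```

The first frame passes through unchanged. The second is pulled back to what the robot's knee and hip can physically reach.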

This is part of why the demos look so good. A skilled performer’s motion is what you are watching, replayed by the robot. The robot did not invent the dance. The robot is performing it. Both the performer’s skill and the robot’s execution are real.

Image source: Wikipedia / Honda ASIMO historical reference

If walking is so important, and the techniques have been around for years, why did it take three decades?

The answer is in three parts: compute, sensors, and money. Reinforcement learning needed GPUs that did not exist in 1995. Inertial sensors precise enough for real-time balance estimation got cheap only in the 2010s. And the funding to run massive parallel simulator training programs arrived only after Tesla, Microsoft, and OpenAI signaled between 2022 and 2024 that humanoid robots were a serious investment category.

Honda ASIMO, the first famous humanoid that could walk reliably, took roughly 15 years and reportedly hundreds of millions of dollars to develop. The Unitree G1 in 2025 cost a fraction of that to develop and walks comparably well. The progress between the two is not just technical. It is the result of every other team in the world picking up where Honda left off, sharing techniques, and building on top of the last 30 years of falls.

The 2026 humanoid robot stands on top of the failures of 30 years of teams that came before it. That is the unromantic version of the breakthrough.

What This Means for the Robot You Are Booking

The Unitree G1 you book through ZMP robots is the result of all of the above. Imitation learning trained the gestures. Reinforcement learning trained the balance. Simulators trained the recovery from disturbances. Motion capture trained the dance routine you saw in the demo video.

You do not need to know any of this to book the robot. You do need to know one practical thing: the robot is most reliable inside the conditions it was trained on. Smooth floors, predictable lighting, supervised crowds, indoor venues. Push it outside that envelope and the controller faces conditions its training never covered. Stay inside the envelope and the robot performs the way the demo videos suggest.

Most modern event humanoids have been trained on the conditions you are likely to deploy them into. That is not an accident. The training set was designed for the application.

FAQ

How does a humanoid robot actually learn to walk?

Through a combination of imitation learning (watching a human or a recorded motion), reinforcement learning (trying motions in simulation and getting reward signals for staying balanced), and sim-to-real transfer. The robot rarely learns on physical hardware first — nearly all early training happens in simulators that model the physics of walking accurately.

What is reinforcement learning in robotics?

Reinforcement learning is a machine learning technique where a controller tries motions and receives reward signals based on outcomes. Good motions (staying upright, reaching a target) reinforce the underlying policy. Bad motions (falling, missing the target) are penalized. Modern humanoid teams run millions of training cycles in simulation to produce policies that handle real-world variation.

What is sim-to-real transfer?

The process of moving a controller trained in a physics simulator onto a physical robot. The simulator is a close but imperfect model of the real world. Sim-to-real techniques like domain randomization train robots in simulators that intentionally vary friction, motor characteristics, and noise so the resulting controller handles real-world differences.

Do humanoid robots use motion capture for training?

Yes, often. Motion capture suits worn by human performers generate reference trajectories that the robot’s controller learns to imitate. Many viral humanoid demos — dances, fights, choreographed routines — originated as motion capture sessions and were transferred to the robot through imitation learning.

Why did walking take so long to solve in robotics?

Three reasons: compute (GPUs powerful enough for fast training only became affordable in the 2010s), sensors (inertial sensors precise enough for real-time balance estimation got cheap only in the 2010s), and funding (massive simulator training programs needed investment that only arrived after 2022). Honda ASIMO took roughly 15 years and reportedly hundreds of millions of dollars. Modern teams replicate that capability in much less time.

Can a humanoid robot learn from one demonstration?

Sometimes, for simple motions like a wave or a pose. Complex behaviors generally require thousands to millions of repetitions in simulation, plus tuning on the physical robot. The marketing line “robot learns from one example” is technically true for narrow motions but should be treated skeptically when applied to anything more complex.

The Bottom Line

A humanoid robot learns to walk through imitation, reinforcement, simulation, and a lot of falling. The Unitree G1 you can rent today is the product of 30 years of progress in machine learning and physics modeling. The result: a robot that walks calmly across your trade show floor on the first take.

Want to see what 30 years of robotics research looks like at your event? See availability on our humanoid robot rental page.